One Platform to Optimize Your Entire Network

Consolidate network, cloud, and security tools.

Share a unified view of network performance, cost, and security.

Reduce toil and free up resources to innovate.

Leverage consumption-based licensing to reduce spend.

Replace legacy NPM/NMS tools

- Troubleshoot across data center, cloud, campus, and internet.

- Optimize multi-cloud monitoring.

- Streamline capacity planning.

Reduce monitoring costs

- Leverage economies of scale with telemetry consolidation.

- Cover multiple use cases across security and performance.

- Proactively test user experience and network performance.

A better pricing model

- Flexible licensing

- No overages during peak usage

- Consumption-based pricing

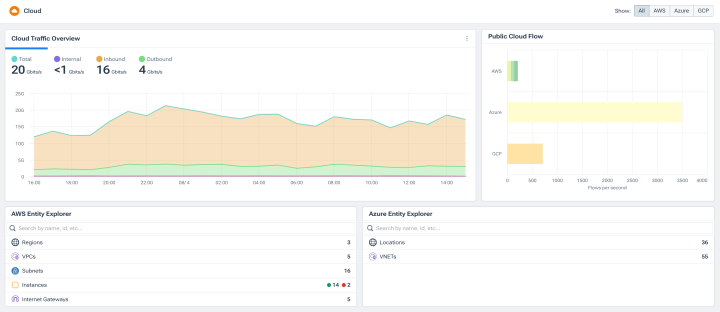

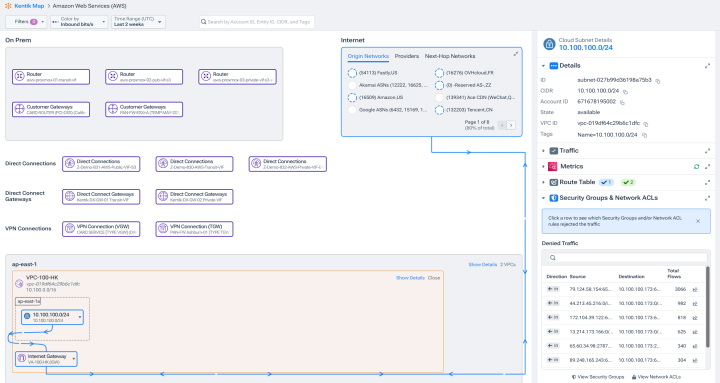

Truly understand hybrid cloud and cost

- Complete visibility from data center to AWS, Azure, Google Cloud, and OCI.

- Analyze cost attribution and usage to right-size hybrid cloud capacity and failover.

- Reduce costs across cloud providers, peering networks, and CDNs.

Network and cloud security

- Use the same telemetry to analyze performance and security incidents.

- Detect DDoS attacks rapidly.

- Enforce network policy across data centers and clouds.

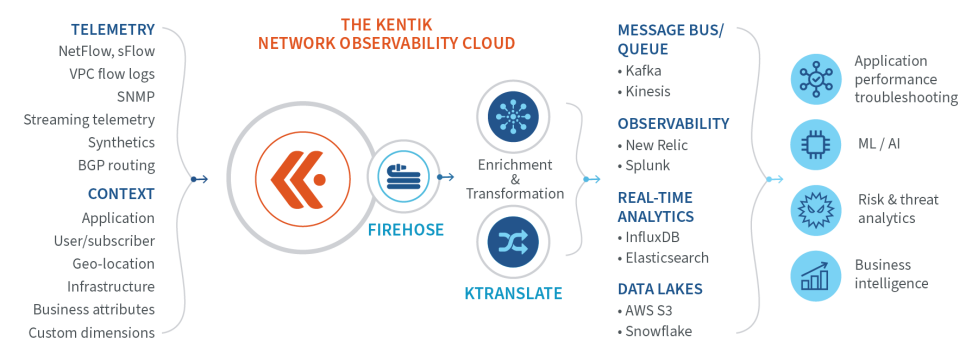

Observability data pipeline

- Unify, enrich, and correlate network observability data and send to other applications.

- Close the network observability data gap in DevOps’ full-stack monitoring.

- Enable multi-source streaming analytics to uncover and transform insights.

See Kentik Firehose.

“Kentik has given us the insight and visibility that we have not been able to achieve through other network performance monitoring products or open source tools. We can see things we simply couldn’t see before.”

Explore the platform

FAQs about Tool Consolidation with Kentik

What kinds of network and observability tools can Kentik replace?

Kentik consolidates capabilities that traditionally required multiple specialized tools: network performance monitoring (NPM), network management systems (NMS), flow analyzers (NetFlow/sFlow/IPFIX collectors), cloud network monitoring, synthetic monitoring, BGP and routing visibility, Kubernetes network observability, and DDoS detection. Most organizations adopting Kentik retire some combination of legacy NPM/NMS tools (such as SolarWinds NPM, ManageEngine OpManager, or WhatsUp Gold), separate flow analyzers, cloud-specific monitoring tools, standalone synthetic testing platforms, and dedicated DDoS or service-provider analytics tools. For deeper comparisons in specific competitive scenarios, see the Kentipedia articles on NETSCOUT Arbor alternatives and Nokia Deepfield alternatives. Kentik typically coexists with rather than replaces full-stack APM and log management platforms — the network intelligence layer complements those tools rather than competing with them.

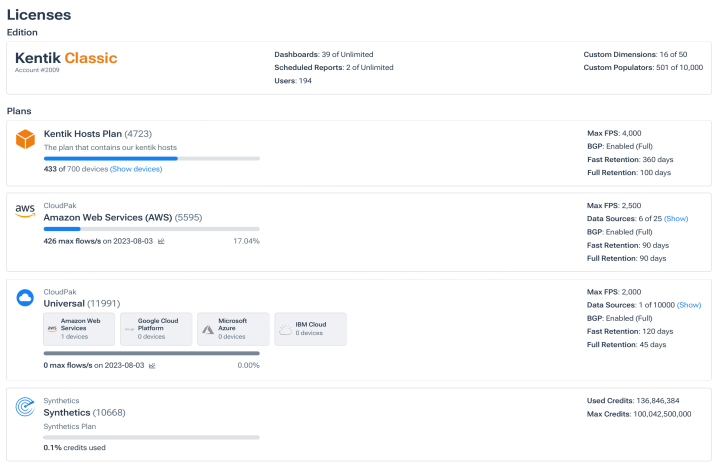

How does Kentik’s consumption-based licensing reduce network monitoring costs?

Kentik’s consumption-based licensing scales with what you actually monitor — flow records per second, device count, synthetic tests, retention period — rather than charging per-device fees that grow regardless of how the network changes. This is particularly valuable for hybrid and multicloud environments where device counts are dynamic (containers, cloud instances, virtual interfaces) and where traditional per-device pricing models become unpredictable or punitive. Teams consolidating legacy tools typically see meaningful cost reduction not just from eliminating redundant licenses but from moving to a pricing model that aligns with actual usage rather than worst-case capacity planning.

Can Kentik replace SolarWinds NPM, LogicMonitor, or other legacy NPM tools?

For many use cases, yes. Kentik provides modern equivalents for the core NPM workflows that teams handle with SolarWinds, LogicMonitor, ManageEngine OpManager, and similar tools, including device monitoring, flow analytics, capacity planning, alerting, and reporting. Kentik also extends beyond traditional NPM with cloud-native flow ingestion, internet path visibility, BGP routing intelligence, and AI-driven investigation that legacy tools weren’t designed for. Whether Kentik can fully replace a given tool depends on which specific workflows your team relies on most. For deeper side-by-side comparisons, see SolarWinds alternatives and LogicMonitor alternatives.

Can Kentik replace AWS CloudWatch or other cloud-native monitoring tools?

Cloud-native monitoring tools (AWS CloudWatch, Azure Monitor, Google Cloud Monitoring, OCI Monitoring) are essential for cloud-specific operational visibility, but each is scoped to its own cloud. Kentik unifies network telemetry across all major clouds plus on-premises and internet environments — making it especially useful for teams running multicloud or hybrid architectures who don’t want to context-switch between cloud-specific tools every time an incident crosses environment boundaries. Most teams keep CloudWatch and other native monitoring for cloud-specific automation and AWS-internal workflows, then layer Kentik on top for cross-environment analytics, BGP and internet path visibility, egress cost analysis, and AI-driven investigation. For a deeper look, see CloudWatch alternatives for multicloud network observability.

Should Kentik replace or complement Datadog, New Relic, or other observability platforms?

Most teams use Kentik alongside Datadog, New Relic, or Dynatrace rather than replacing them. Full-stack observability platforms are excellent for application code, traces, infrastructure metrics, and log management — that’s their design center. Kentik fills the network-layer gap those platforms weren’t built to address, including deep flow forensics, BGP routing analytics, internet path visibility, and cloud egress cost analysis. The combination provides full visibility from application code through the underlying network, with each tool playing to its strengths. For a deeper look at how Kentik complements Datadog specifically, see Datadog alternatives for network teams.

What’s the typical migration path from legacy tools to Kentik?

Successful tool consolidation usually follows a phased approach rather than a hard cutover. The typical path: deploy Kentik in parallel with existing tools (since Kentik is SaaS-delivered with no on-premises footprint), validate that key use cases work in Kentik with real data over 30 to 60 days, identify which legacy tools become redundant once Kentik is established, and decommission them on the normal renewal cycle to avoid wasted license spend. Most teams keep at least one legacy tool running for a defined period during transition to ensure continuity, then progressively reduce dependence as Kentik becomes the primary platform. The right phasing depends on the specific tools in play and the team’s risk tolerance.

What capabilities should a network intelligence platform include?

A network intelligence platform should consolidate the capabilities teams currently get from separate tools: unified telemetry ingestion across SNMP, streaming telemetry, NetFlow/sFlow/IPFIX, VPC flow logs, BGP, and synthetic monitoring; cross-environment analytics that span data center, multicloud, internet, and edge; ingest-time enrichment with BGP, geographic, application, and business-context metadata; AI-driven investigation that explains root cause across all telemetry sources; and consumption-based pricing that scales predictably as the network evolves. Kentik provides all of these in a single SaaS platform. For a broader look at the tool landscape and how to evaluate options, see Best Network Monitoring Tools 2026.
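To make "ingest-time enrichment" concrete, here is a minimal sketch of the general idea: each flow record is tagged with business-context metadata when it arrives, using a longest-prefix match on its source and destination addresses. This is an illustration only, not Kentik's implementation. The prefix table, the field names (`src_ip`, `dst_ip`, `site`, `owner`), and the functions `lookup` and `enrich` are all hypothetical.

```python
import ipaddress

# Hypothetical prefix-to-metadata table; a real deployment would load this
# from an inventory or CMDB rather than hard-coding it.
PREFIX_METADATA = {
    ipaddress.ip_network("10.0.0.0/8"): {"site": "dc-east", "owner": "platform"},
    ipaddress.ip_network("10.20.0.0/16"): {"site": "dc-east", "owner": "payments"},
    ipaddress.ip_network("203.0.113.0/24"): {"site": "internet", "owner": "external"},
}


def lookup(ip: str) -> dict:
    """Return metadata for the most specific prefix containing ip, or {}."""
    addr = ipaddress.ip_address(ip)
    matches = [net for net in PREFIX_METADATA if addr in net]
    if not matches:
        return {}
    # Longest-prefix match: the network with the largest prefix length wins.
    best = max(matches, key=lambda net: net.prefixlen)
    return PREFIX_METADATA[best]


def enrich(flow: dict) -> dict:
    """Return a copy of the flow record with src_*/dst_* metadata added."""
    enriched = dict(flow)
    for side in ("src", "dst"):
        for key, value in lookup(flow[f"{side}_ip"]).items():
            enriched[f"{side}_{key}"] = value
    return enriched


flow = {"src_ip": "10.20.1.5", "dst_ip": "203.0.113.9", "bytes": 1200}
print(enrich(flow))
```

Tagging records once at ingest means later queries can group or filter by fields such as owner or site without repeating the lookup at query time.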

How does consolidating tools improve cross-team collaboration?

Tool fragmentation creates cross-team friction: network teams have NPM, cloud teams have cloud-specific monitoring, security teams have flow analyzers, and incidents that span all three become exercises in screen-sharing and re-explaining context. Consolidating to a unified platform means network, cloud, and security teams share the same data, the same dashboards, and the same investigation workflows. Kentik supports this by enriching every record at ingest with business and operational context, providing role-based access for different teams, and integrating with the ticketing, chat, and notification tools each team already uses. The downstream effect is faster incident response, fewer “is it the network?” debates, and better-aligned capacity and architecture decisions.

How much can teams typically save by consolidating to Kentik?

The savings vary substantially by environment, but they come from three sources: direct license cost reduction (eliminating redundant tools), infrastructure cost reduction (removing on-premises monitoring servers, databases, and collectors), and operational cost reduction (less toil maintaining multiple tools and integrating between them). Teams that have published case studies on Kentik adoption typically frame the ROI in terms of these three categories rather than a single percentage figure, because each organization’s starting point and tool footprint are different. The most reliable way to quantify the opportunity is a structured TCO analysis comparing current tool spend (licenses, infrastructure, operations) against Kentik consumption-based pricing, which Kentik account teams help model during evaluation.
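As a rough illustration of what such a TCO comparison might look like, the sketch below adds up the three cost categories for each existing tool and compares the multi-year total with a single consolidated platform. Every figure is a made-up placeholder, not Kentik pricing or customer data, and the names `AnnualCost` and `tco_comparison` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AnnualCost:
    """Yearly cost of one tool, split into the three categories above."""
    licenses: float
    infrastructure: float
    operations: float

    @property
    def total(self) -> float:
        return self.licenses + self.infrastructure + self.operations


def tco_comparison(current: list[AnnualCost], proposed: AnnualCost,
                   years: int = 3) -> dict[str, float]:
    """Compare the multi-year cost of the current tool set with a proposed one."""
    current_total = sum(tool.total for tool in current) * years
    proposed_total = proposed.total * years
    savings = current_total - proposed_total
    savings_pct = 100.0 * savings / current_total if current_total else 0.0
    return {
        "current": current_total,
        "proposed": proposed_total,
        "savings": savings,
        "savings_pct": savings_pct,
    }


# Placeholder inputs in arbitrary currency units per year (assumptions only).
legacy_tools = [
    AnnualCost(licenses=100, infrastructure=20, operations=30),
    AnnualCost(licenses=50, infrastructure=10, operations=40),
]
consolidated = AnnualCost(licenses=120, infrastructure=0, operations=30)

print(tco_comparison(legacy_tools, consolidated, years=3))
```

Keeping the categories separate shows where the difference comes from, which matches how the savings are framed above.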